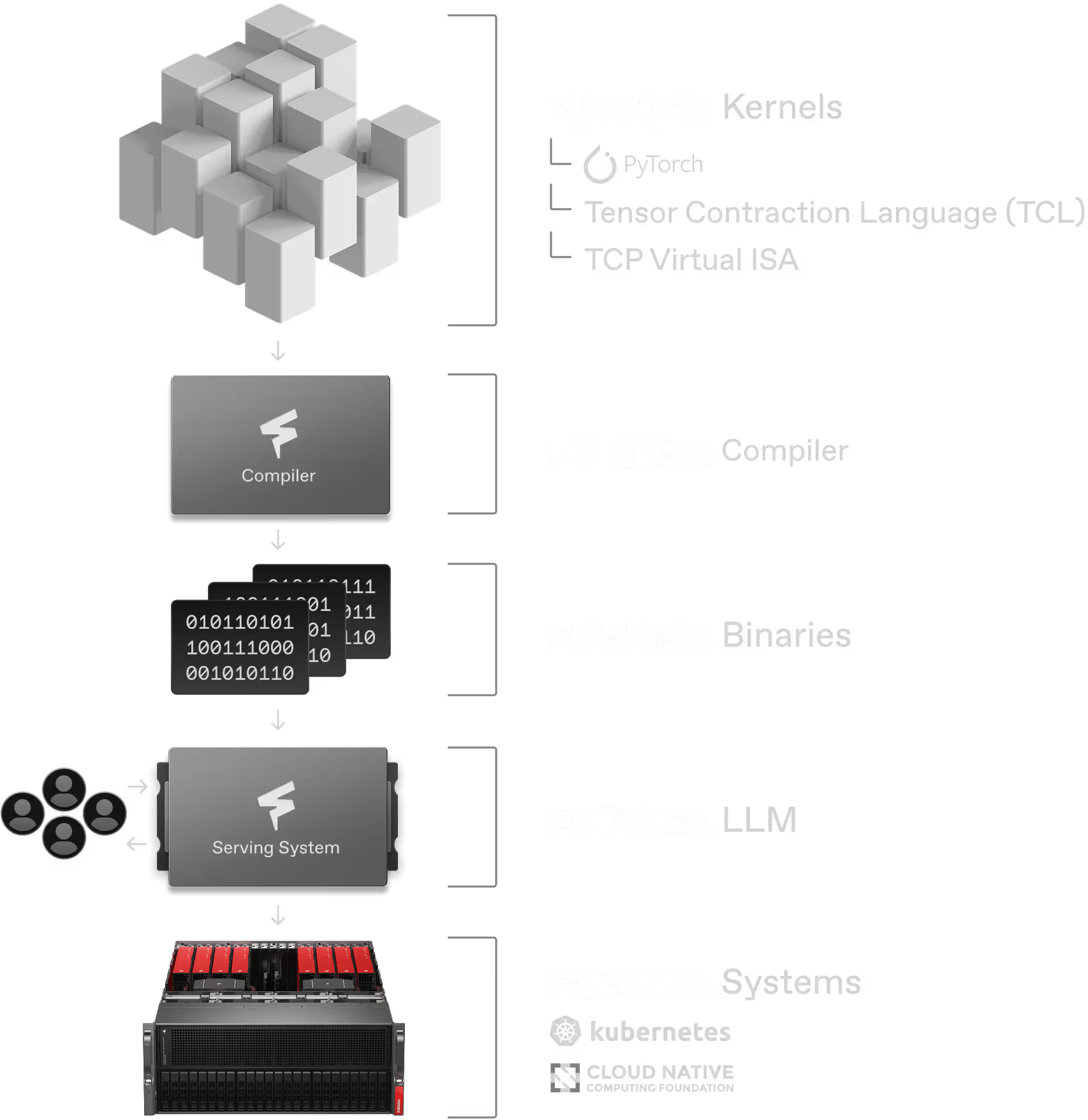

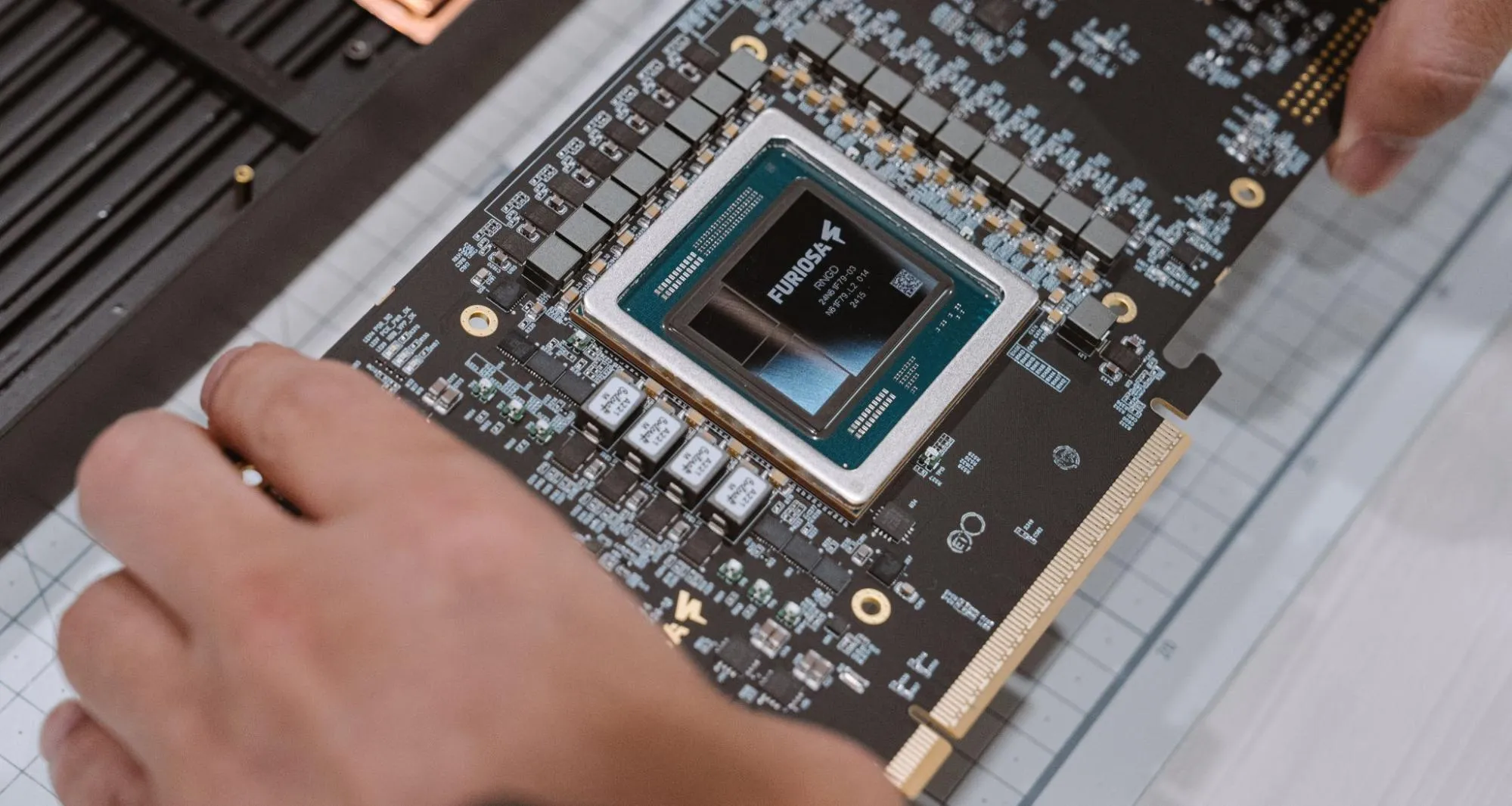

The software stack activating RNGD performance

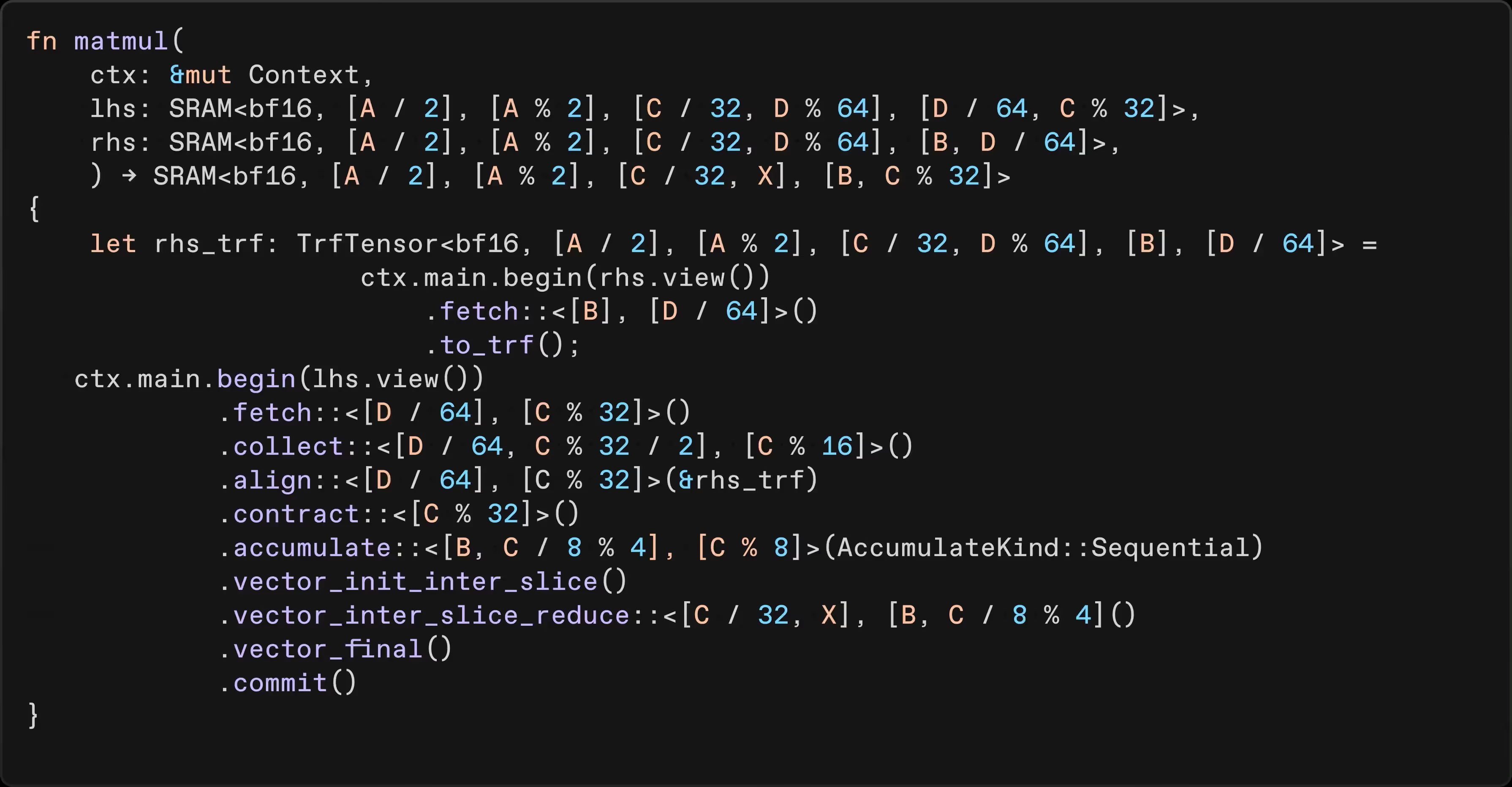

Radical efficiency across compilation and execution

The GPU bottleneck: Intractable search spaces

The tensor-native advantage: Global optimization via shapes and tactics

TCP advantages: Accurate cost model for compiler optimizations

Reference applications available on GitHub

Blog

Experience RENEGADE Summit 2026

RNGD outperforms RTX Pro 6000 with the latest SDK