Powerfully efficient AI inference for enterprise and cloud

#1 EFFICIENT LLAMA INFERENCE

Llama 3.1 70B, charted in token/s/W (2,048 input tokens / 128 output tokens / x 8 cards)

| Accelerator | Software | Precision | Throughput (token/s) |
|---|---|---|---|
| RNGD | FuriosaSDK | FP8 | 957.05 |
| H100 SXM | TensorRT-LLM 0.15.0 | FP8 | 2,064.53 |
| L40S | TensorRT-LLM 0.15.0 | FP8 | 163.53 |

Llama 3.1 8B, charted in token/s/W (128 input tokens / 4,096 output tokens / x 1 card)

| Accelerator | Software | Precision | Throughput (token/s) |
|---|---|---|---|
| RNGD | FuriosaSDK | FP8 | 3,935.25 |
| H100 SXM | TensorRT-LLM 0.15.0 | FP8 | 13,222.06 |
| L40S | TensorRT-LLM 0.15.0 | FP8 | 2,989.17 |

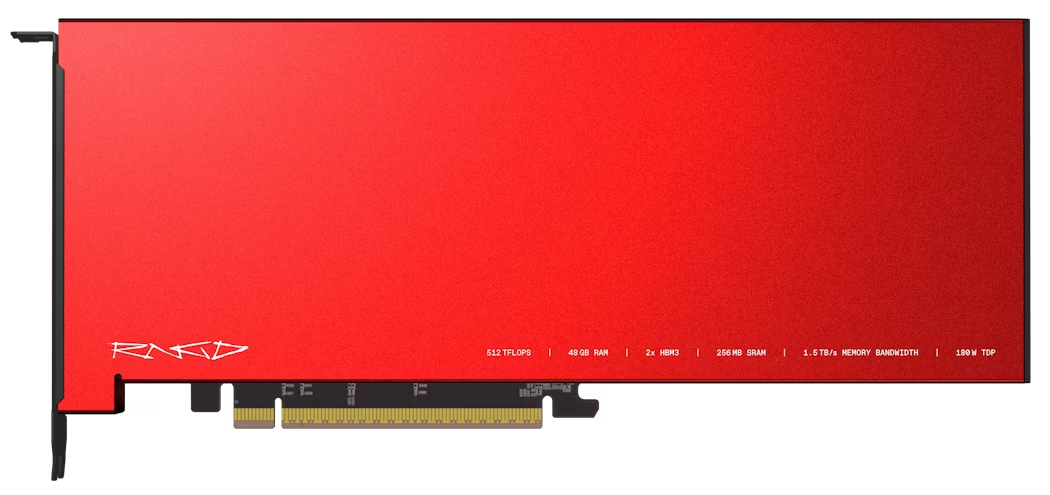

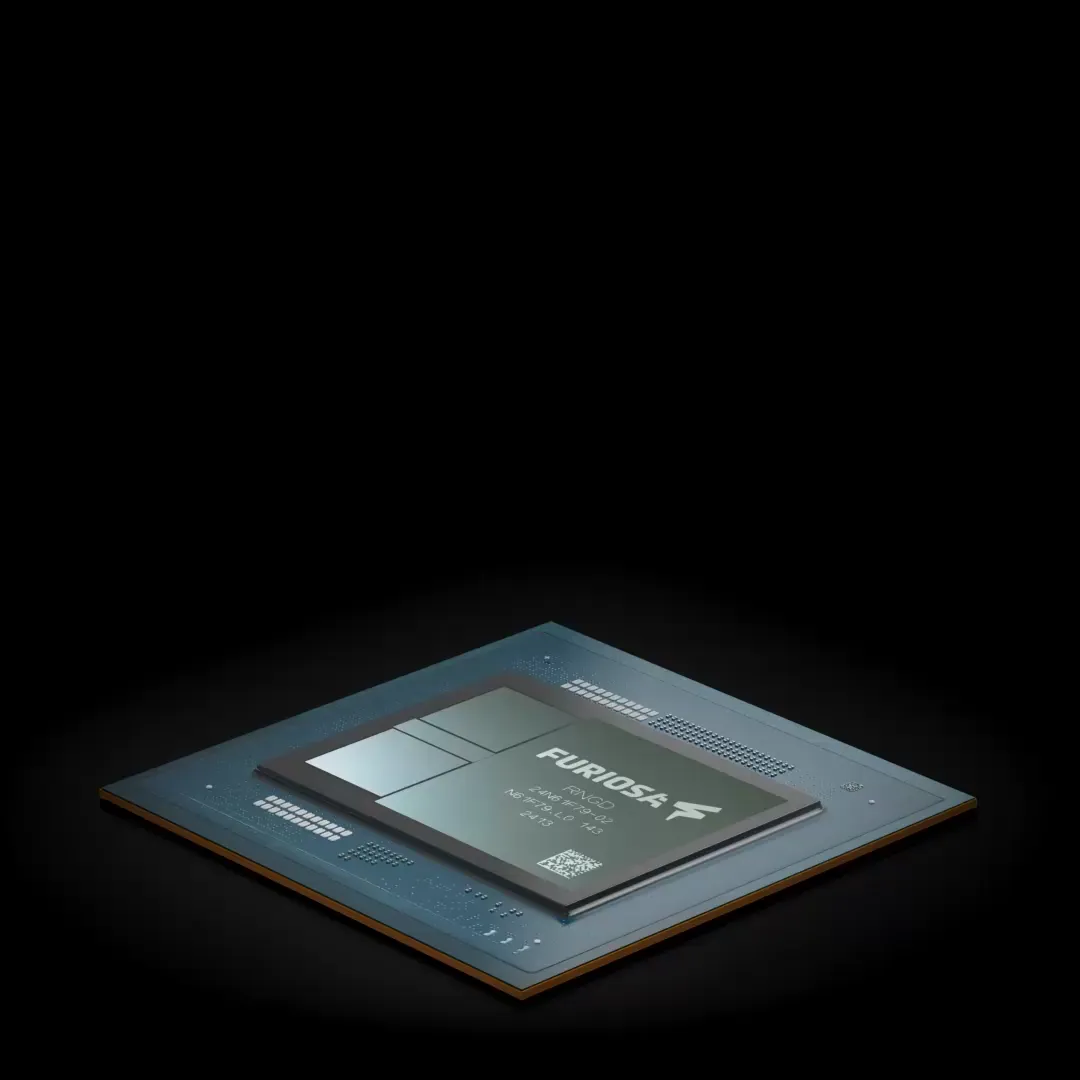

| | RNGD | H100 SXM | L40S |
|---|---|---|---|
| Technology | TSMC 5nm | TSMC 4nm | TSMC 5nm |
| BF16/FP8 (TFLOPS) | 256/512 | 989/1979 | 362/733 |
| INT8/INT4 (TOPS) | 512/1024 | 1979/- | 733/733 |
| Memory Capacity (GB) | 48 | 80 | 48 |
| Memory Bandwidth (TB/s) | 1.5 | 3.35 | 0.86 |
| Host I/F | Gen5 x16 | Gen5 x16 | Gen4 x16 |
| TDP (W) | 180 | 700 | 350 |

Disclaimer: RNGD measurements were made internally by FuriosaAI, based on current specifications and/or internal engineering calculations. NVIDIA results were retrieved from the NVIDIA website, https://github.com/NVIDIA/Tens..... /perf-overview.md, on Aug 25, 2024.
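The token/s/W metric can be approximated from the throughput and TDP figures listed above. The sketch below is an illustration only: it assumes that each quoted throughput is the total across all cards in the run and that power equals the number of cards times TDP. The page does not state which power figure underlies its charts, so measured efficiency may differ. The function and variable names are hypothetical.

```python
# Throughput (token/s) and TDP (W) copied from the figures on this page.
# ASSUMPTION: efficiency = total throughput / (number of cards * TDP).
# The page does not say which power figure its charts are based on.
TDP_W = {"RNGD": 180, "H100 SXM": 700, "L40S": 350}

BENCHMARKS = {
    "Llama 3.1 70B (8 cards)": (
        8,
        {"RNGD": 957.05, "H100 SXM": 2064.53, "L40S": 163.53},
    ),
    "Llama 3.1 8B (1 card)": (
        1,
        {"RNGD": 3935.25, "H100 SXM": 13222.06, "L40S": 2989.17},
    ),
}


def tokens_per_second_per_watt(throughput, cards, tdp_w):
    """Return throughput divided by the combined TDP of all cards."""
    return throughput / (cards * tdp_w)


def efficiency_table():
    """Compute token/s/W for every benchmark and accelerator."""
    table = {}
    for name, (cards, throughputs) in BENCHMARKS.items():
        table[name] = {
            device: tokens_per_second_per_watt(tps, cards, TDP_W[device])
            for device, tps in throughputs.items()
        }
    return table


if __name__ == "__main__":
    for name, row in efficiency_table().items():
        print(name)
        # Sort from most to least efficient.
        for device, eff in sorted(row.items(), key=lambda kv: -kv[1]):
            print(f"  {device:<9} {eff:8.3f} token/s/W")
```

Under these assumptions, RNGD comes out at roughly 0.66 token/s/W on the 70B run (versus about 0.37 for H100 SXM) and roughly 21.9 token/s/W on the 8B run (versus about 18.9 for H100 SXM).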

EFFICIENT AI INFERENCE IS HERE

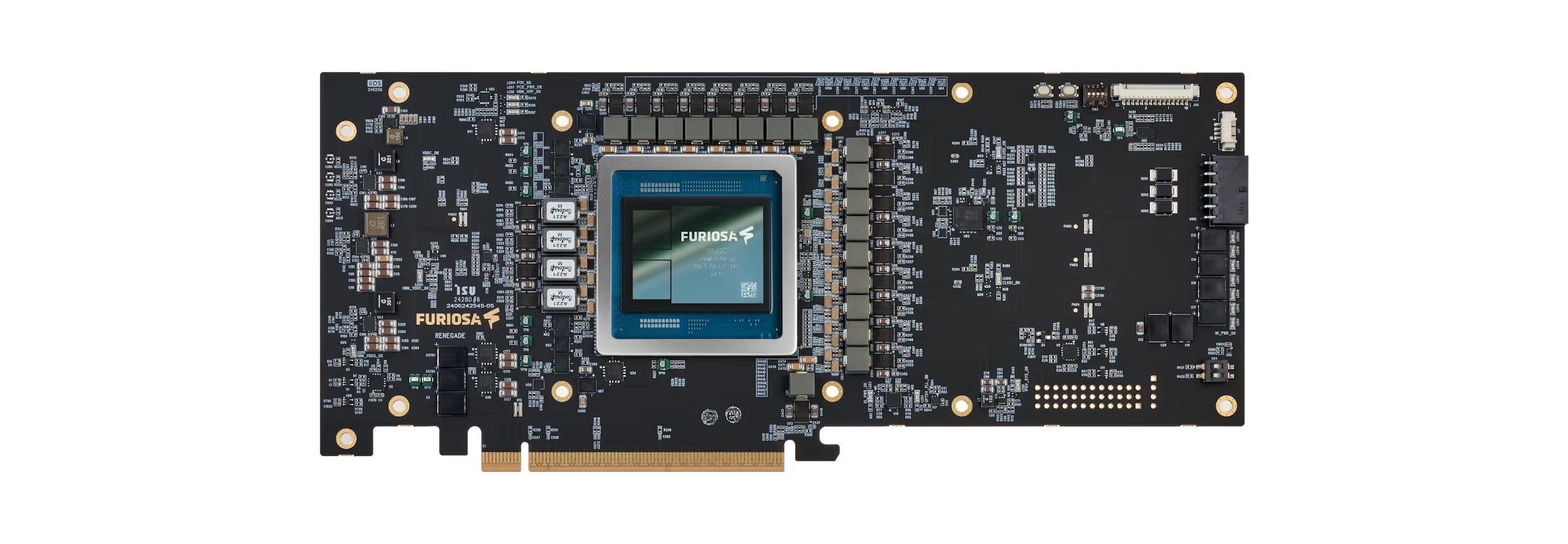

Tensor contraction, not matmul

RNGD breaks away from treating matrix multiplication as the basic compute primitive, unlocking powerful performance and efficiency.
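To make the distinction concrete, the sketch below compares a tensor contraction written directly over a 3-D activation tensor with the same computation forced through a 2-D matmul. It is a generic illustration in plain Python. It does not represent RNGD hardware behavior or any FuriosaSDK API, and all function names are hypothetical.

```python
def contract_last_axis(x, w):
    """Contract x[b][s][h] with w[h][o] over the shared index h.

    Returns y[b][s][o]. The batch and sequence axes keep their identity.
    """
    hidden = len(w)
    out = len(w[0])
    return [
        [
            [sum(row[h] * w[h][o] for h in range(hidden)) for o in range(out)]
            for row in batch
        ]
        for batch in x
    ]


def matmul(a, b):
    """Plain 2-D matrix multiplication: a[m][k] times b[k][n]."""
    inner = len(b)
    cols = len(b[0])
    return [
        [sum(a_row[k] * b[k][n] for k in range(inner)) for n in range(cols)]
        for a_row in a
    ]


def contract_via_matmul(x, w):
    """Same result via matmul: flatten (b, s), multiply, then reshape back."""
    seq = len(x[0])
    flat = [row for batch in x for row in batch]           # shape (b*s, h)
    y = matmul(flat, w)                                     # shape (b*s, o)
    return [y[i:i + seq] for i in range(0, len(y), seq)]    # shape (b, s, o)


if __name__ == "__main__":
    x = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]   # shape (2, 2, 2)
    w = [[1, 0, 1], [0, 1, 1]]                 # shape (2, 3)
    assert contract_last_axis(x, w) == contract_via_matmul(x, w)
    print(contract_last_axis(x, w))
```

Both paths give the same numbers, because matmul is the special case of tensor contraction with two 2-D operands. The difference is that the matmul path must first flatten the batch and sequence axes, discarding shape information that a contraction-based formulation keeps available.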

Tensor Contraction Processor

Tensor mapping for max utilization

This fundamental design choice streamlines programming and maximizes parallelism and data reuse, while keeping compute and memory resources flexible and reconfigurable based on tensor shapes.

Furiosa Compiler leverages this flexibility and reconfigurability of hardware to select the most optimized tactics, delivering powerful and efficient deep learning acceleration for all scales of deployment.

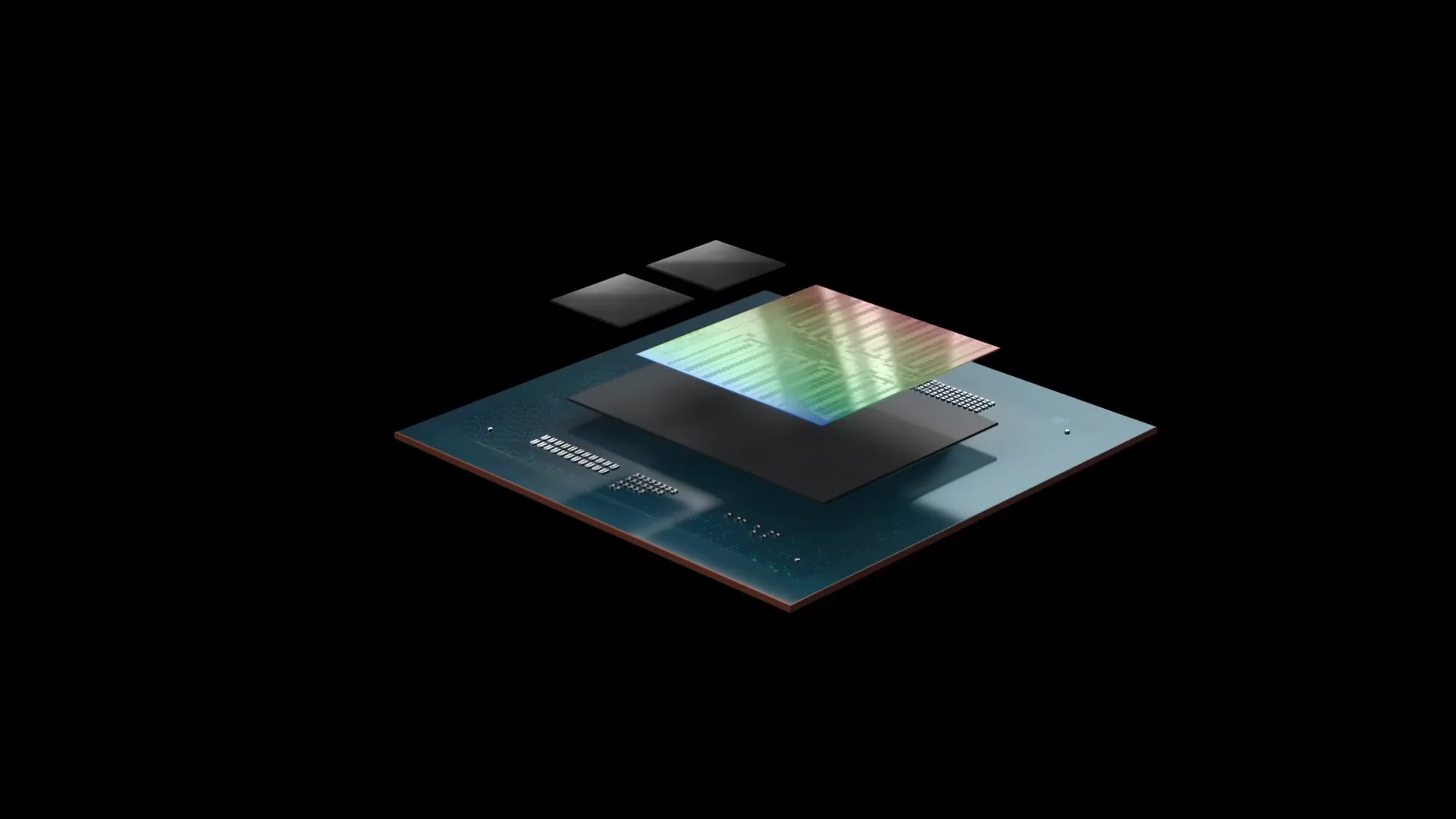

Advanced packaging technology

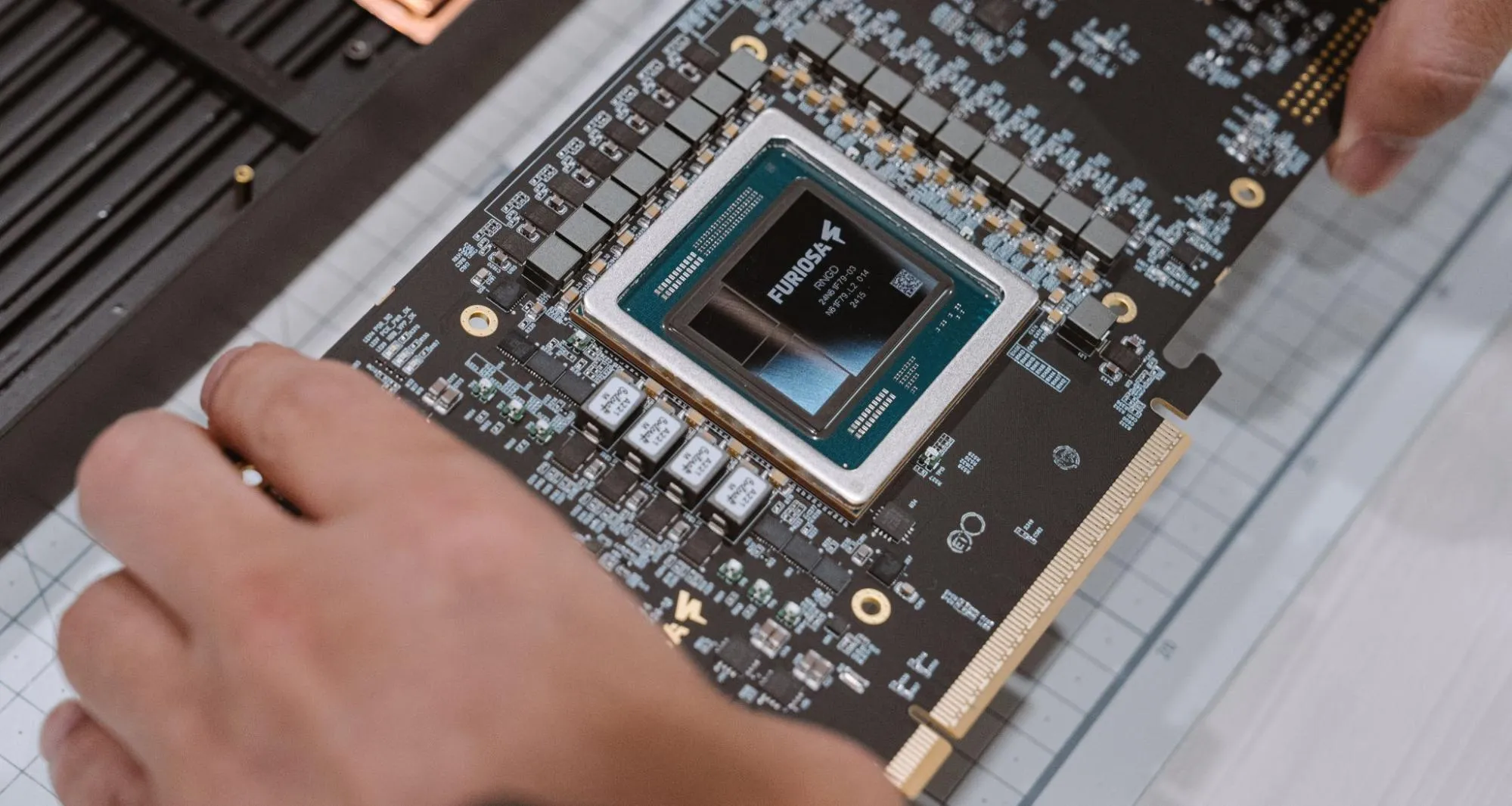

Turnkey AI inference you can own today

SOFTWARE FOR LLM DEPLOYMENT
