Leading cloud providers, telecoms, and enterprise platforms joined FuriosaAI’s inaugural RENEGADE summit today to announce new commercial products powered by RNGD, our high-performance, energy-efficient AI accelerator for data center inference.

These new collaborations include Samsung SDS’s announcement of a new cloud AI compute service powered by RNGD, the first-ever “NPU-as-a-Service” (NPUaaS) offering by a cloud service provider (CSP) in Korea. This service represents a major milestone in Samsung’s broader full-stack AI strategy, providing enterprise customers with high-efficiency compute at scale.

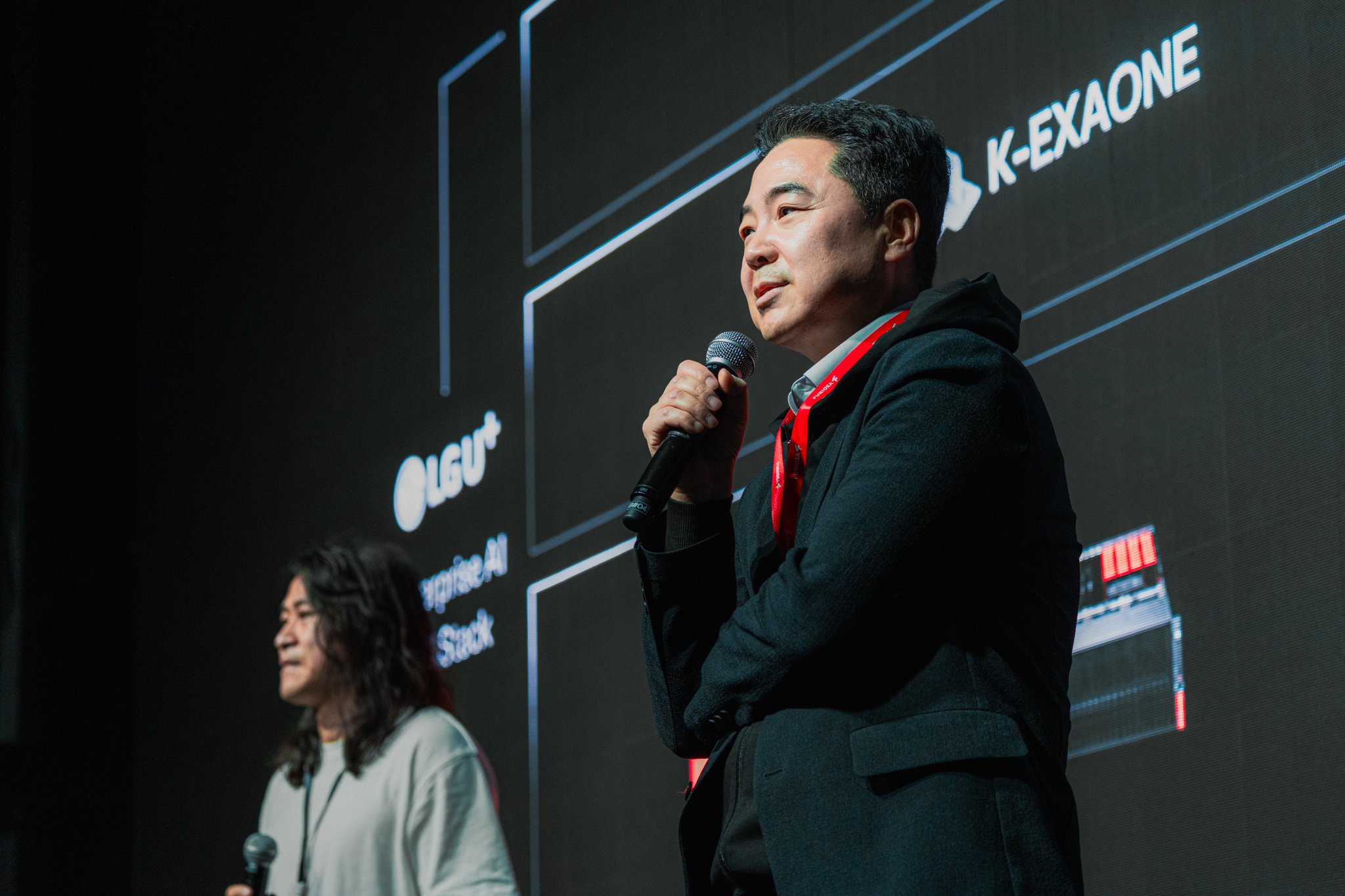

Today’s RENEGADE summit in Seoul convened global technology leaders, ecosystem partners, and policymakers to discuss the future of sustainable, high-performance AI infrastructure.

Scaling AI with new global partners

RNGD’s shift from pilot programs to large-scale commercial deployment is accelerating across the global AI landscape. In addition to Samsung SDS, RENEGADE showcased a diverse ecosystem of partners bringing RNGD-powered solutions to market:

- Anexia: The European cloud infrastructure provider is partnering with Furiosa to integrate RNGD into its global service offerings, expanding our footprint in the EMEA market.

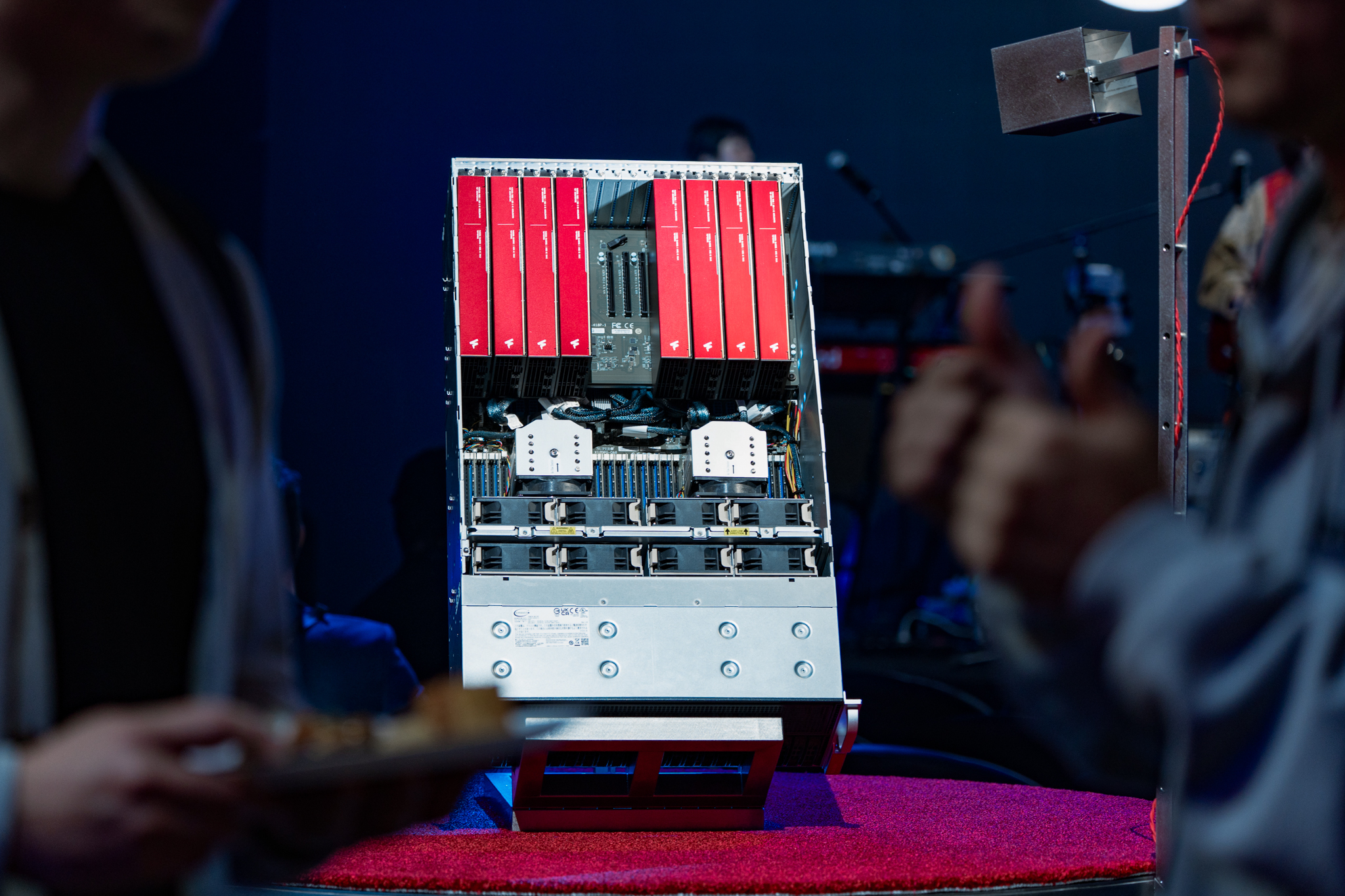

- Baro AI: The Korean AI infrastructure company showcased an AI server integrating RNGD with their POSEIDON liquid-cooling architecture, which ensures maximum computational throughput and absolute stability.

- Creverse: The Korean education technology company is deploying RNGD to power its AI-driven HUMMINGo platform.

- flexgrid.cloud: flexgrid.cloud delivers sovereign AI compute at the edge, standardized on RNGD to power air-cooled metro AI data centers built for inference in power-constrained urban environments worldwide, without liquid cooling or the overhead of traditional GPU-centric infrastructure.

- Lablup: The Korean AI infrastructure company has integrated RNGD into their Backend.ai platform for managing AI workloads across diverse AI accelerators.

- LG AI Research / LG U+: FuriosaAI and LG U+ have launched the Sovereign AI Appliance, a “ready-to-run” AI inference solution integrating a foundational model, platform, and AI silicon developed in Korea.

- MangoBoost: The Korean silicon company has partnered with Furiosa to develop the world's first NPU-DPU scale-out architecture, leveraging a 400G RDMA fabric to enable linear multi-node scaling for large-scale LLM inference.

- MegazoneCloud: The Korean cloud managed service provider is launching a new cloud compute project powered by RNGD to meet surging regional demand.

- Nota AI: The AI optimization company demonstrated Vision-Language Models (VLMs) running on RNGD for real-time industrial safety alerts and natural language video search.

- Samsung SDS: Beginning this July, Samsung SDS will launch a new RNGD-powered NPUaaS on the Samsung Cloud Platform. Customers can access RNGD in a range of card configurations, fully integrated with the platform’s virtualization, storage, and networking layers.

- Seoul National University: SNU is using RNGD to power a Retrieval-Augmented Generation (RAG) platform that combines massive curated databases with real-time web data.

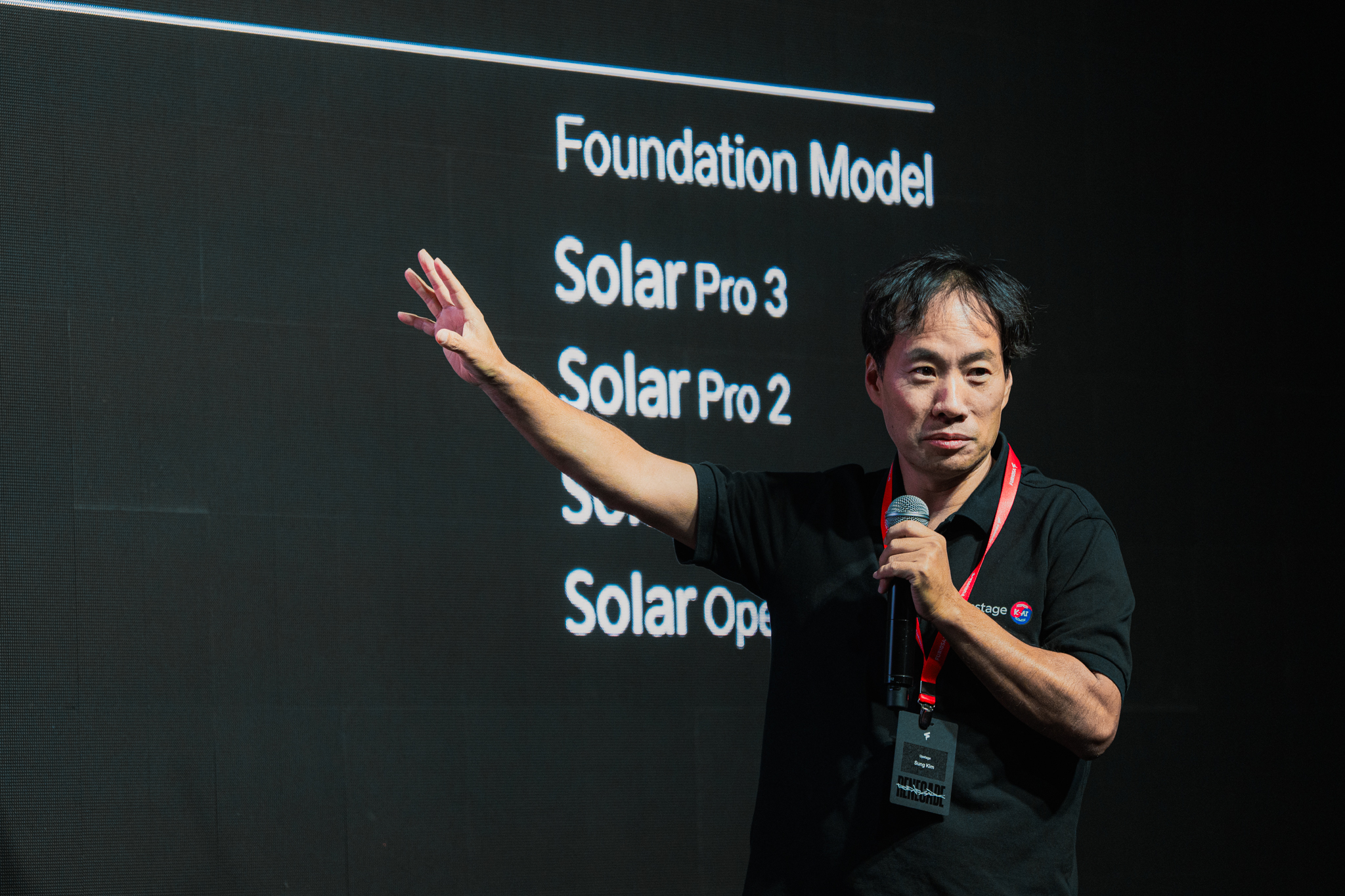

- Upstage: The Korean AI lab is partnering with Furiosa to deploy their Solar family of foundation models on RNGD for a wide range of applications.

- WISEnut: The AI services company is integrating RNGD with their agent-specialized LLM WISE LLOA and RAG solution WISE iRAG to efficiently deploy an “all-in-one” AI search agent.

Real world performance advantage: 2x more users per kW

The commercial momentum is backed by detailed performance data. Our latest benchmarks, released with SDK 2026.1, show that RNGD delivers a decisive economic advantage for Large Language Model (LLM) inference.

In tests using the Qwen3-32B (FP8) model under a strict Service-Level Agreement (SLO) of 20-40 Tokens/Second per user, RNGD served 1.8x to 2x more users per kilowatt than NVIDIA’s RTX PRO 6000. By operating at a chip TDP of just 180W (compared to 600W for advanced GPUs), RNGD enables a 30% or greater reduction in Total Cost of Ownership (TCO).

Efficiency through chip architecture

These results are driven by our proprietary Tensor Contraction Processor (TCP) architecture. Unlike traditional GPUs that map multidimensional AI math onto fixed matrix units, TCP treats tensor contraction as a native hardware primitive.

This structural efficiency allows enterprises to scale their AI workloads without the "liquid-cooling tax" or expensive data center retrofits required by GPU hardware. Combined with a mature SDK supporting vLLM, Kubernetes, and torch.compile,

RNGD is ready for immediate enterprise-grade deployment.

Reach out here to learn more.

.jpg)

.jpg)

Written by

The Furiosa Team